The IOIO Plotter strikes again!

I'll start with a story and move on to some technical detail on my cool new image processing algorithm. A couple of weeks ago I was presenting at East Bay Maker Faire with my friend Al. I was showing off my plotter among other things, taking pictures of passers-by, making their edge-detection portraits and giving them away as gifts. At some point, a woman came in, introduced herself as a curator for an art and technology festival called Codame and invited us to come and present our work there.

Frankly, at this point I had no idea what Codame was and pretty much decided not to go. Fortunately, I have friends that are not as stupid as me, and a couple of days later Al drew my attention to the fact that some reading on codame.com and our favorite search engine suggested that this is going to be pretty badass.

OK, so we both said yes, and now we're a week from D-day and I decide that presenting the same thing again would be boring, and anyway edge detection is not artistic enough... I had a rough vision in my head of some random scribbles that would make sense when viewed from a distance, but didn't quite have an idea how to achieve that. At this point I became mildly obsessed with the challenge, as well as mildly stressed by having to present the thing by the end of the week, so I gave up sleep and started trying out my rusty arsenal of computer vision and image processing tools from days long forgotten.

I got lucky! Two nights later, and there it was. Just like I imagined it!

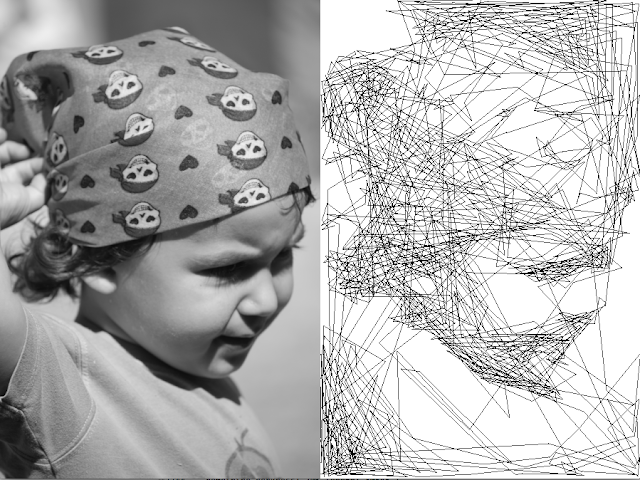

If you step away from your monitor a few meters (or feet if you insist) the right picture will start looking like the left one. And if you zoom in, it looks like nothing really. It's all, by the way, a single long stroke, made out of straight line segments.

From this point, all that was left was to not sleep a few more nights and integrate this algorithm into my Android app that controls the plotter. Barely made it, as usual. The first end-to-end plot saw the light of day on the morning of the opening.

The event was amazing! Seriously, everyone reading this blog who happens to be around San Francisco should check it out next time. The Codame organization is committed to giving a stage and other kinds of help to works of art that conventional art institutions refuse to acknowledge: art made by code, by circuits, by geeks and by engineers. The exhibits included a lot of video art, computer games and mind-boggling installations as well as tech-heavy performance arts (music and dance).

My plotter worked non-stop and I was on a mission to cover an entire wall with its pictures. By the end of two evenings, covered it was! I even gave away some. The reactions were very positive - watching people stand in front of this piece fascinated and smiling joyfully was worth all the hard work. The first time one of them approached me and asked "are you the artist?" I looked behind me to see who he was talking to. "No", I said, "I'm an engineer". But after the tenth time, I just smiled and said "yes" :)

Now for the geeky part of our show.

How Does It Work?

After struggling with complicated modifications of the Hough transform and whatnot, the final algorithm is surprisingly simple. So much so, that I wouldn't be surprised if somebody has already invented it.

The main idea is:

- At every step (each step generates one segment), generate N (say, 1000) random candidate lines within the frame. Each line can have both its endpoints random, or one endpoint random and the other forced to where the previous step ended, in order to generate a one-stroke drawing like the one above.

- From those N lines, choose the one for which the average darkness it covers in the input image is the greatest.

- Subtract this line from the input image.

- Repeat until the input image becomes sufficiently light.
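To make the steps above concrete, here is a minimal Python sketch of the loop. It is not the code from my app, just an illustration under a few assumptions: the image is a list of rows of "darkness" values (0.0 is white, 1.0 is black), endpoints are integer pixel coordinates, the subtraction is allowed to drive pixels below zero, and the names `line_pixels`, `scribble`, `ink`, `stop_mean` and `max_segments` are all made up for this example.

```python
import random


def line_pixels(p0, p1):
    """Pixels visited by a straight segment between two integer points (x, y)."""
    (x0, y0), (x1, y1) = p0, p1
    steps = max(abs(x1 - x0), abs(y1 - y0))
    if steps == 0:
        return [(x0, y0)]
    return [(x0 + round(i * (x1 - x0) / steps),
             y0 + round(i * (y1 - y0) / steps))
            for i in range(steps + 1)]


def scribble(darkness, n_candidates=1000, ink=1.0, stop_mean=0.05,
             max_segments=10000, seed=0):
    """Greedy one-stroke scribble; returns a list of (start, end) segments."""
    rng = random.Random(seed)
    img = [row[:] for row in darkness]  # work on a copy of the input
    h, w = len(img), len(img[0])
    total = sum(sum(row) for row in img)
    segments, prev_end = [], None

    def random_point():
        return (rng.randrange(w), rng.randrange(h))

    # Repeat until the image is "sufficiently light" (mean darkness is low).
    while total / (w * h) > stop_mean and len(segments) < max_segments:
        best, best_score = None, None
        for _ in range(n_candidates):
            # Chain from the previous endpoint to keep it a single stroke.
            start = prev_end if prev_end is not None else random_point()
            end = random_point()
            pixels = line_pixels(start, end)
            score = sum(img[y][x] for x, y in pixels) / len(pixels)
            if best_score is None or score > best_score:
                best, best_score = (start, end, pixels), score
        start, end, pixels = best
        # Subtract the chosen line (assumption: values may go negative).
        for x, y in pixels:
            img[y][x] -= ink
        total -= ink * len(pixels)
        segments.append((start, end))
        prev_end = end
    return segments
```

Calling `scribble(image)` on such a darkness grid returns the segments in drawing order, each one starting where the previous one ended. Letting pixels go negative is a choice made for this sketch: it guarantees the mean darkness drops on every step, so the loop always terminates.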

A small but important refinement is now required. If we do just that, the algorithm will helplessly try to cover every non-white pixel with black, and in particular will have an annoying tendency to get stuck in any dark area for too long and darken it too much. To fix that, instead of thinking of a black line as a black line of thickness 1, let's think about it as a 20% gray line of thickness 5 (or any other such reciprocal pair). The intuition behind this is that when viewed from afar (or after down-sampling), a thin black line darkens its entire environment slightly rather than covering a very small area in black.

In practice, an efficient way to implement this is simply to work on an input image resized to 20% and draw 20% gray lines on it. This saves a lot of computation too. The coordinates of the actual output lines can still be of arbitrarily fine resolution, limited only by the resolution of our output medium.
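The "reciprocal pair" idea is easy to check with a few lines of Python. This is only a sketch: `downsample` and `to_output` are hypothetical helpers invented for this example, the box-average resize is one possible choice of resampling, and mapping a small-image pixel to the centre of its full-resolution block is an assumption about how coordinates could be scaled back up.

```python
def downsample(img, factor=5):
    """Box-average each factor x factor block (sizes assumed divisible by factor)."""
    h, w = len(img) // factor, len(img[0]) // factor
    return [[sum(img[y * factor + dy][x * factor + dx]
                 for dy in range(factor) for dx in range(factor)) / factor ** 2
             for x in range(w)]
            for y in range(h)]


def to_output(point, factor=5):
    """Map a small-image pixel to the centre of its block at full resolution."""
    x, y = point
    return ((x + 0.5) * factor, (y + 0.5) * factor)


# A black line of thickness 1 on a white 10x10 canvas (1.0 is black)...
full = [[0.0] * 10 for _ in range(10)]
full[2] = [1.0] * 10

# ...becomes a 20% gray line of thickness 1 on the 2x2 downsampled canvas,
# which covers 5 rows of the original: the "20% gray, thickness 5" picture.
small = downsample(full, 5)
```

Here the top row of `small` comes out at 0.2 and the bottom row at 0.0, since each block in the top row contains 5 black pixels out of 25.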

Source code, you say? Here: https://github.com/ytai/ScribblerDemo

Nice job! Waiting to see how the "spline" version looks...

Really a nice one. Great content and very well written. Thanks!

Very very cool. Do you have a larger collection of the art posted, or is it all given away?

No, I don't. I have a lot of physical drawings that I haven't photographed. Since I don't have any good use for so many of them, I started giving away any new ones I generate.

Fantastic, man! My respects!